The question of how far the processes in a data warehouse (DWH) can be automated keeps many project teams busy today.

The desire for a comprehensive automation solution usually arises very quickly.

In this multi-part blog post, I describe what expectations are placed on an automation solution, what the vendors promise, and what reality looks like in the end. In the first part, I described the project teams' expectations of automation.

The promises of automation vendors and the first negative examples in the tool selection process are the focus of this second part of the mini-series.

Project teams looking for an automation solution are spoiled for choice. There are now many products on the market: established solutions and newcomers as well as countless in-house developments.

It is not uncommon for teams to experience disappointment after product selection or product launch. One of the main causes is the pledges that salespeople make about their automation solution during the sales process.

Automation vendor pledges

When selecting vendors for an automation solution, there always comes a point when project teams invite vendors to a demo or product presentation.

Presentations show the ideal world, and demos are precisely tailored to the strengths of the automation solution on display, regardless of whether it is an established product, a start-up's offering, or a consulting firm's own development. This is how it should be! After all, all automation solutions pursue (more or less) the same goal.

A good salesperson asks what the project team expects from the automation solution. This is exactly where the vendor's pledges come into play as a response to the existing expectations:

- "Of course we support Data Vault modeling!" - But how? And is that what the project team expects?

- "We can automate (everything)!" - But what exactly? A search on the Internet shows how many tools have the keyword automation in their description but turn out to be completely different from what was expected.

- "Our solution works right out of the box!" - Quick install and that's it?

- "We can do continuous delivery! You don't have to worry about anything!"

- "All code generation 'out of the box'! No customizations needed!"

The answers to other expectations of the project team could be as follows:

"When it comes to orchestration, continuous delivery of artifacts, and development speed - our solution maps all of that and almost runs itself. There are just a few small things to do and that's it."

"Generate Data Vault tables? Sure, in all variations!"

If the project team asks more specifically about the capabilities of domain-oriented data modeling, the answer might be:

"Our solution supports domain-oriented data modeling!"

Everything is promised and usually much, much more. Some promises may be meant in a different context, but that doesn't matter, does it?

We all know these pledges, we all know the need to evaluate solutions objectively, and at the same time we are easily convinced that the solution being presented is the best one.

I don't want to create the wrong impression. Every solution has its raison d'être, and all statements and promises made can be correct, accurate and complete in a given context. In this sense, there is no bad or good automation solution - only one that fits the situation better or less well.

This is why it is so important as a project team to be clear about what is expected from an automation solution and according to which criteria it can be objectively evaluated.

These are just some of the promises I have encountered over the years. And yet, they are also the reasons to invest in an automation solution.

Case studies

Below, I present some real cases that I have experienced in recent years. First, only a few negative cases. After that, though, also a positive example of a successful selection of an automation solution.

First case

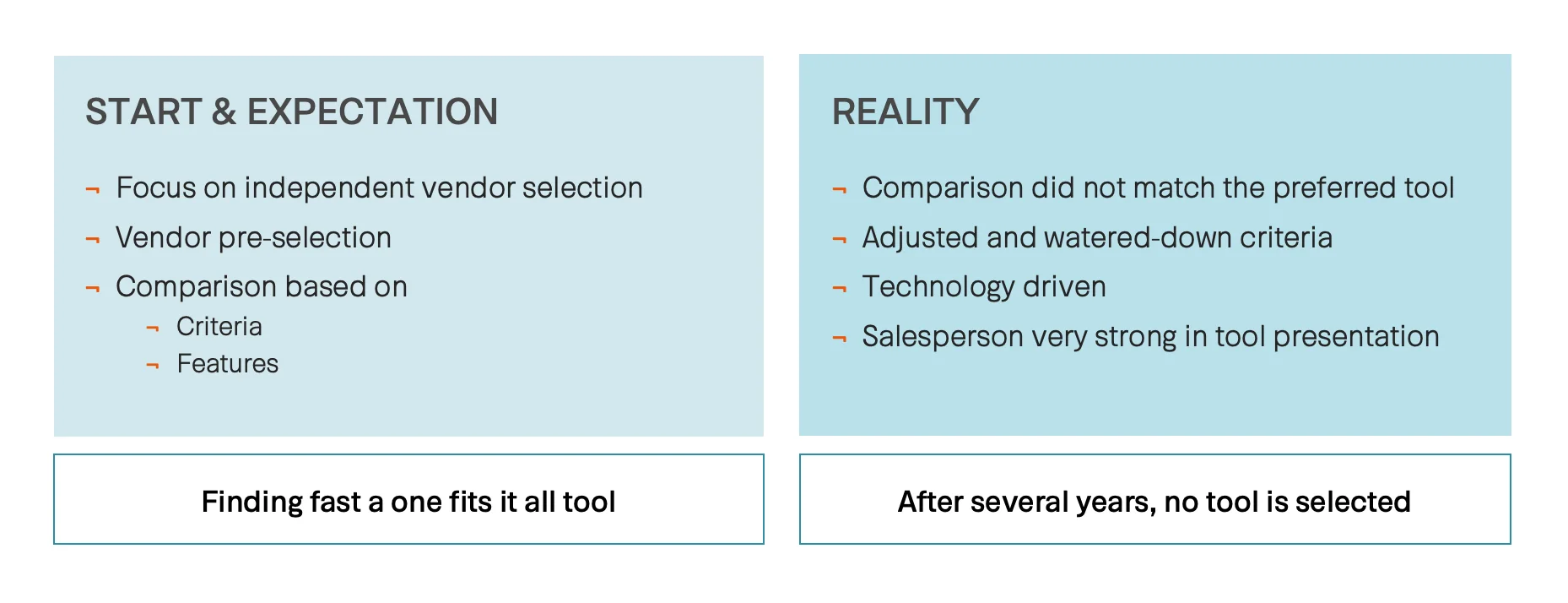

In the first case, a good toolkit for the selection was put in place from the beginning. The project team, let's call it Team A, conducted a comprehensive selection process, starting with a long list of automation vendors and whittling it down to four vendors, that is, four automation solutions.

Team A was quite proud, and rightly so, of what they had accomplished: they had defined a set of really good selection criteria, weighted them, and recorded everything they learned from the vendors (in writing or in interviews) against those criteria. Team A's goal was to find an automation solution that met the company's needs as quickly as possible.
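
To make the idea of weighted selection criteria a bit more tangible, here is a minimal Python sketch of such a scoring matrix. The criteria names, weights, vendor names and scores are purely illustrative assumptions on my part and are not taken from Team A's actual questionnaire.

```python
# Hypothetical selection criteria with integer weights (in percent, summing to 100).
WEIGHTS = {
    "data_vault_support": 40,
    "continuous_delivery": 30,
    "orchestration": 20,
    "ease_of_installation": 10,
}

# Hypothetical vendor scores per criterion on a scale from 0 (poor) to 5 (excellent).
SCORES = {
    "Vendor A": {
        "data_vault_support": 5,
        "continuous_delivery": 3,
        "orchestration": 4,
        "ease_of_installation": 2,
    },
    "Vendor B": {
        "data_vault_support": 3,
        "continuous_delivery": 4,
        "orchestration": 3,
        "ease_of_installation": 5,
    },
}


def weighted_score(scores, weights):
    """Sum of weight * score over all criteria (integers, so no rounding issues)."""
    return sum(weights[criterion] * scores[criterion] for criterion in weights)


def rank_vendors(all_scores, weights):
    """Return (vendor, total) pairs sorted from highest to lowest total."""
    totals = {vendor: weighted_score(s, weights) for vendor, s in all_scores.items()}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for vendor, total in rank_vendors(SCORES, WEIGHTS):
        print(vendor, total)
```

The point of writing the weights down before the first demo is that the ranking then follows from the criteria and not from the impression a presentation leaves behind.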

Actually, a good starting situation, right? However, and this is the other side of the coin, Team A secretly had a preferred automation solution. This was neither transparent nor obvious throughout the entire process.

How could this have happened? The promises made by the vendors were crucial. I honestly admit that I have great respect for the performance and persuasiveness of some salespeople. In the case described here, the presentation of one automation solution was so good that the participating members of Team A made it their desired solution and did not deviate from it afterwards.

Back to case 1: The results of the selection process - which was carried out really well - did not, of course, correspond to the preferred automation solution, but they did correspond to the original requirements criteria.

What happened next? The criteria from the previous selection questionnaire were adapted and watered down until all criteria corresponded to the desired automation solution. And that's how it ended up in first place!

In addition, technology-oriented thinking again gained the upper hand. That is, Team A focused more on the technological features of the automation solutions than on the actual requirements defined by use cases, data architecture, and other criteria.

And what was the result in case 1? Today, after several years, Team A (and with it the company) still has not settled on an automation solution. The selected automation solution did not pass the subsequent proof of concept (PoC) or did not work as expected. Time passed, Team A decided on a different automation solution, that PoC failed as well, and so on.

From my point of view, the biggest mistake in this first case was that Team A stopped focusing on its criteria and required functions during the selection process and instead let emotions and a few fancy features drive the decision for an automation solution.

The third and final part of this blog post will cover the other cases for selecting an automation solution and my thoughts on them.

Be sure to check back.

Until then

Yours, Dirk