"Do we actually still need data modelers if AI can take over?" The question came up after a coaching session. I paused. Not because the question was fundamentally wrong — but because it was the wrong question.

The right question is: what stays — and what's new? And the answer isn't unsettling. It's actually pretty interesting. Because the tasks AI is taking over were rarely the ones data modelers found most rewarding. What remains — and what's coming — is more demanding, more strategic, and closer to the actual value of the work.

The first three articles in this series showed why AI cannot automatically create information models, where generic automation falls short, and what a hybrid workflow looks like that puts AI to genuinely good use. This fourth and final article turns the lens inward: what does all of this mean for the role itself?

The shift: what's changing

For a long time, the data modeler's role had a clear center of gravity: gather requirements, document, structure, implement. A craft-driven job that rewarded diligence and precision. AI is targeting exactly that — the repetitive, documentation-heavy parts. Transcription, initial object identification, template population, physical model generation: these are areas where AI can now make a genuine contribution.

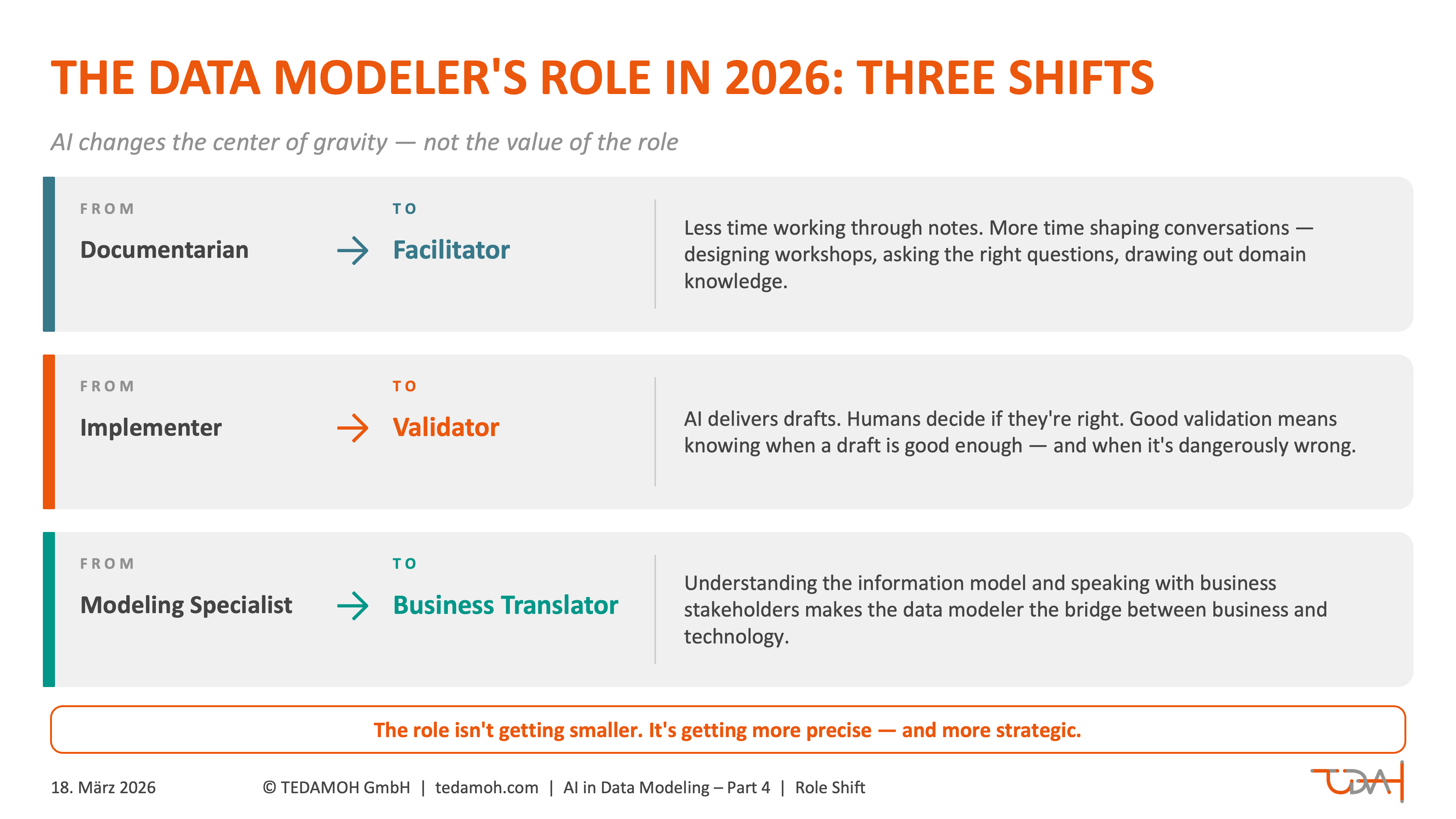

What does that mean? Not that the job disappears. It means the center of gravity shifts — in three directions:

From documentarian to facilitator. The data modeler spends less time working through conversations after the fact, and more time shaping them in the moment. Which questions need to be asked in a workshop? Who needs to be in the room? What's still unclear — and how do you draw it out? That's not administrative work. That's guided knowledge extraction.

From implementer to validator. AI delivers drafts. Humans decide whether those drafts are right. That sounds straightforward — it isn't. Good validation means knowing when a draft is good enough, and when it's dangerously wrong. That requires domain knowledge, experience, and an intuition for the business that can't be automated.

From modeling specialist to business translator. Someone who understands the information model and can also speak with business stakeholders becomes the bridge between business and technology. This role isn't new — but it's becoming more important as AI abstracts away more and more of the technical side.

Competencies in transition

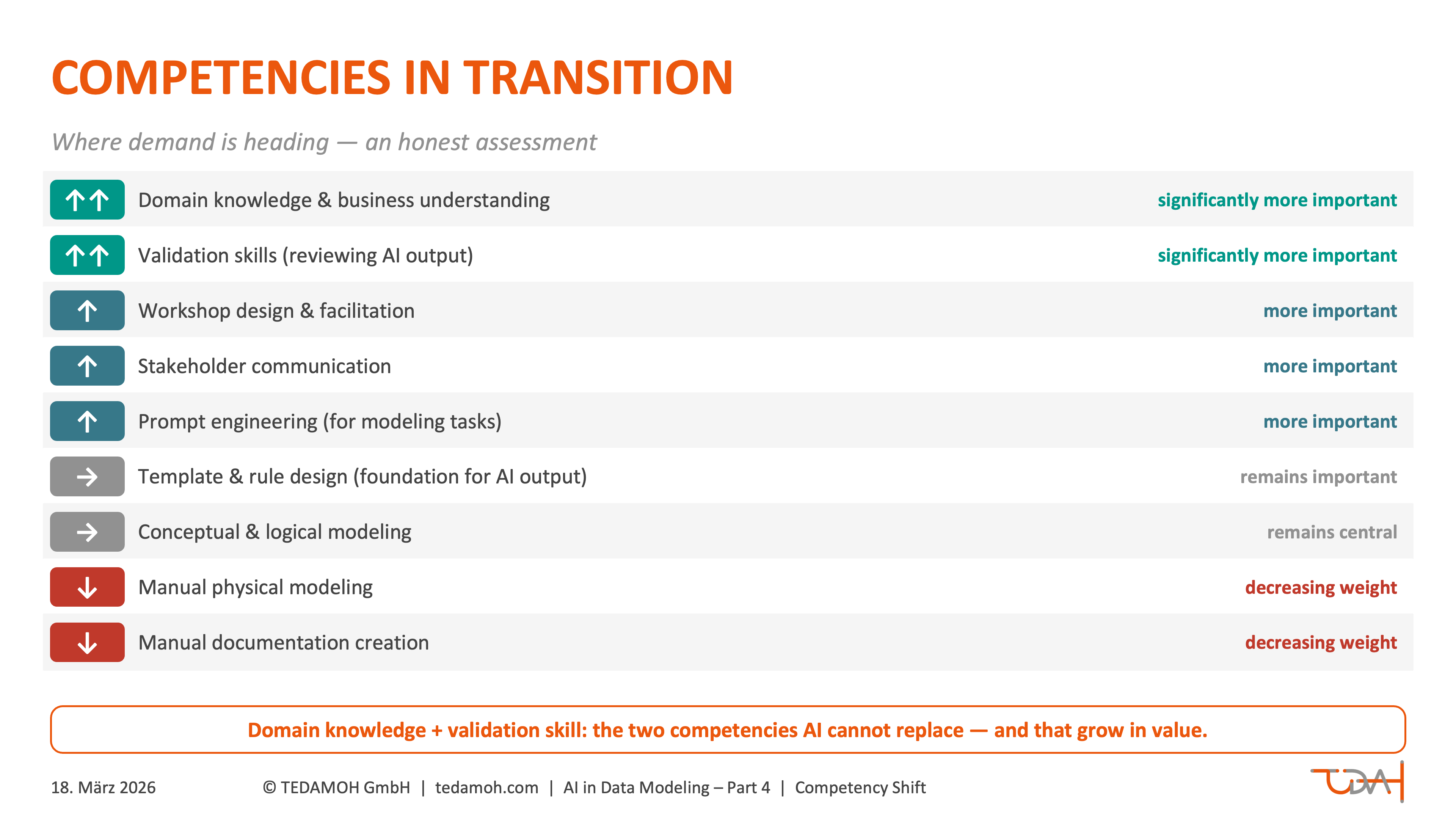

Which skills become more valuable in this new context — and which are losing weight? The overview below isn't a judgment about individuals. It's an assessment of where demand is heading.

| Competency | Trend |
|---|---|
| Domain knowledge & business understanding | ↑↑ significantly more important |
| Validation skills (reviewing AI output) | ↑↑ significantly more important |
| Workshop design & facilitation | ↑ more important |
| Stakeholder communication | ↑ more important |
| Prompt engineering (for modeling tasks) | ↑ more important |
| Manual physical modeling | ↓ decreasing weight |
| Manual documentation creation | ↓ decreasing weight |
| Conceptual & logical modeling | → remains central |
| Template & rule design (foundation for useful AI output) | → remains important |

A note on the last row: template and rule design means creating the foundation within which AI can do useful work. Who decides how an entity definition template is structured? Which fields are mandatory, what order applies, which naming conventions are binding in this organization? These decisions are made by humans — and the quality of AI output depends directly on how well that foundation is designed.
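To make this concrete, here is a minimal sketch of how such template decisions could be written down as explicit rules and applied to an AI-generated draft. Everything in it is an assumption for illustration: the field names in `MANDATORY_FIELDS`, the PascalCase naming rule in `NAME_PATTERN`, and the helper `check_entity_draft` are hypothetical and would look different in every organization.

```python
import re

# Hypothetical template: which fields are mandatory, in the agreed order.
MANDATORY_FIELDS = ["name", "definition", "business_owner", "example"]

# Hypothetical naming convention: PascalCase entity names such as "SalesOrder".
NAME_PATTERN = re.compile(r"^(?:[A-Z][a-z0-9]+)+$")


def check_entity_draft(draft: dict[str, str]) -> list[str]:
    """Return the findings for one AI-generated entity definition draft."""
    findings = []

    # Every mandatory field must be present and non-empty.
    for field_name in MANDATORY_FIELDS:
        if not draft.get(field_name, "").strip():
            findings.append(f"missing mandatory field: {field_name}")

    # The entity name must follow the organization's naming convention.
    entity_name = draft.get("name", "")
    if entity_name and not NAME_PATTERN.fullmatch(entity_name):
        findings.append(f"name violates naming convention: {entity_name}")

    return findings


if __name__ == "__main__":
    draft = {"name": "customer_tbl", "definition": "", "business_owner": "Sales"}
    for finding in check_entity_draft(draft):
        print(finding)
```

A check like this only catches formal gaps. Whether the definition of "customer" actually fits the business is still a question for a human reviewer.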

Two points deserve particular attention. First: domain knowledge isn't the same as industry knowledge. It means understanding your specific organization's business model deeply enough to recognize that an AI-generated definition of "customer" is too generic — and to ask the right question that draws out what's missing. That's not a given. It's a core competency.

Second: validation skill is its own capability, one that needs to be actively developed. It doesn't just mean "is this correct?" — it also means: "how would I know if it weren't?" Anyone who has learned to systematically challenge AI output protects the entire team from silent errors that embed themselves in a model and only surface much later.

What disappears — what emerges

An honest inventory, without spin:

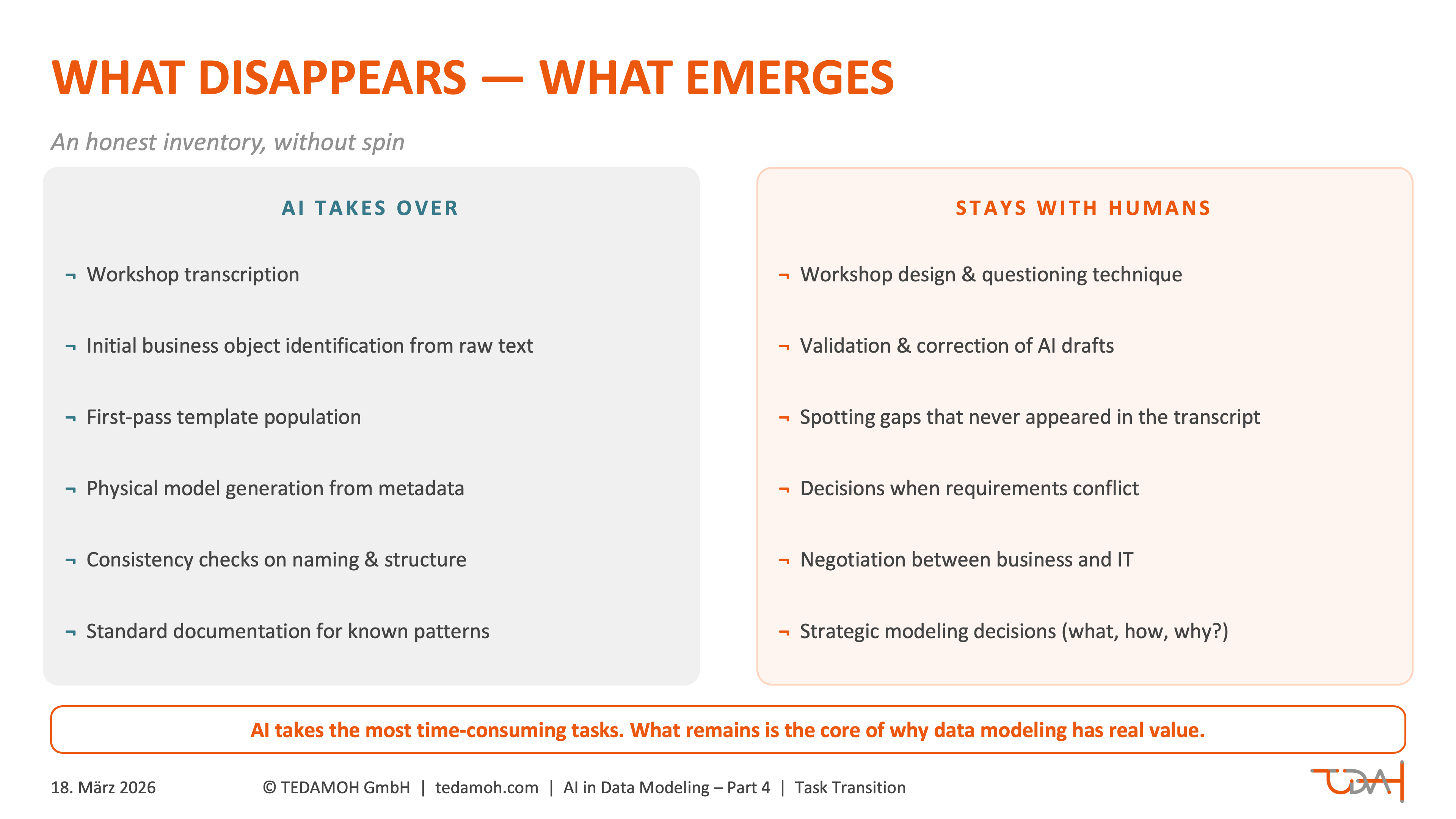

What AI is increasingly taking over: workshop transcription, initial identification of business objects from raw text, first-pass population of entity definition templates, generation of physical models from defined metadata, consistency checks on naming conventions and structural patterns, standard documentation for known modeling patterns.

What stays with humans: workshop design and questioning technique, validation and correction of AI drafts, spotting gaps that never appeared in the transcript, decisions when requirements conflict, negotiation between business and IT, strategic modeling decisions (what gets modeled how — and why?), judgment about when a model is good enough and when it needs more work.

About this series: This is Part 4 of 4 in our series on AI-assisted data modeling. Part 1 examines the fundamental paradox. Part 2 explains why generic automation fails. Part 3 walks through the hybrid 4-step workflow.

What stands out: the tasks AI is taking over were often the most time-consuming — and rarely the most interesting. What remains is the core of why data modeling has real value: understanding business logic, making good decisions under uncertainty, connecting two worlds that talk past each other without a translator.

Bringing the team along

No transition happens in a vacuum. Anyone working with a team will get questions — some spoken, some not. "Is my position being cut?" "Do I need to learn how to code now?" "What am I supposed to do with these AI tools — I only just figured out the old modeling software?"

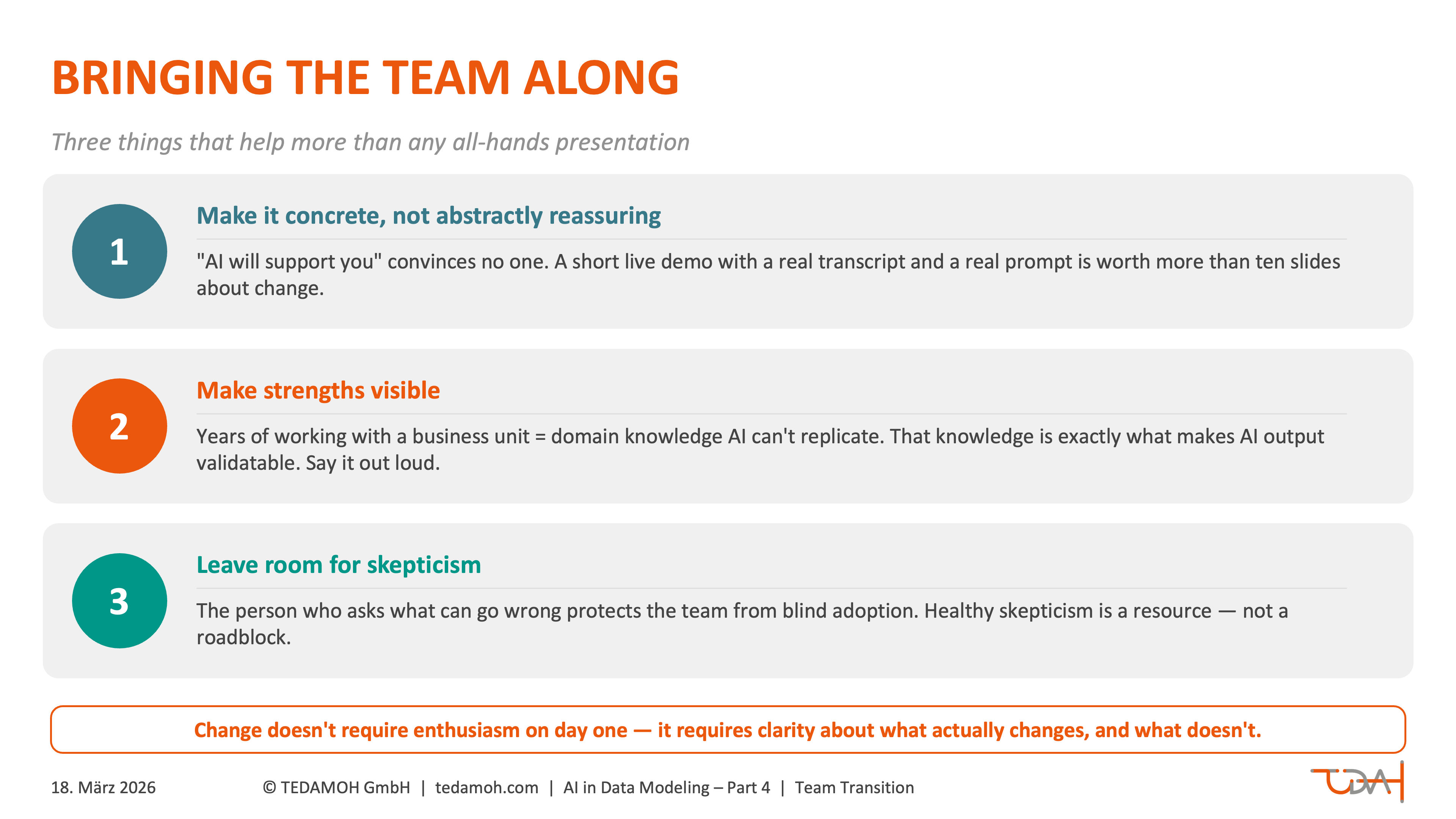

Three things help more than any all-hands presentation:

Make it concrete, not abstractly reassuring. "AI will support you" sounds good but convinces no one. Showing specifically which task will be AI-assisted — and what that actually looks like — is far more effective. A short live demo with a real transcript and a real prompt is worth more than ten slides about change.

Make strengths visible. Someone who has worked closely with a particular business unit for years and understands its data logic has an advantage that AI amplifies rather than erodes. That domain knowledge is exactly what makes it possible to validate AI output in the first place. That needs to be said out loud.

Leave room for skepticism. Not everyone needs to be immediately enthusiastic. The person who first asks what can go wrong is protecting the team from blind adoption. Healthy skepticism is a resource, not a roadblock.

A new opportunity: finally doing information modeling

There's one more perspective that often gets lost in this conversation — and it's actually the most optimistic one: AI makes information modeling genuinely accessible for many teams for the first time.

Let's be honest. In many organizations, information modeling has been avoided — not because no one understood its value, but because the effort seemed too high. Trying to capture everything during the conversation, listening back through hours of recordings, pulling objects out of handwritten notes, filling templates by hand, endless alignment rounds. Anyone asked to build an information model alongside a live project usually pushed it to "someday." Someday, as everyone knows, never comes.

AI removes exactly these obstacles. The effort for the repetitive steps drops dramatically. What used to take an afternoon per object now yields a first draft in an hour. What used to mean weeks of manual follow-up after a workshop now happens in hours.

That means teams that have done little or no information modeling before — because it was simply too much of a burden — now have a real entry point. Not as a compliance exercise, but as a workable process that fits into everyday project life.

And in times of skills shortages, there's another dimension: when AI handles the routine work, a single experienced data modeler can support significantly more projects than before. The expertise doesn't need to be multiplied — it needs to be deployed more precisely. That's not displacement. That's scale.

Conclusion: a role that's becoming more important

Back to the question from the beginning. Do we still need data modelers? Yes — but not for the same things as before. AI takes over the work that repeats. What remains is the work that requires judgment, experience, and understanding how things connect. Exactly what good data modelers have always used to make the difference.

The role isn't getting smaller. It's getting more precise. And anyone who understands how to deploy AI sensibly — and where not to — is more valuable to their organization than ever before.

That's the final article in this series. If you're interested in how solid data modeling directly affects time-to-market and competitive advantage, stay tuned — more on that is coming.

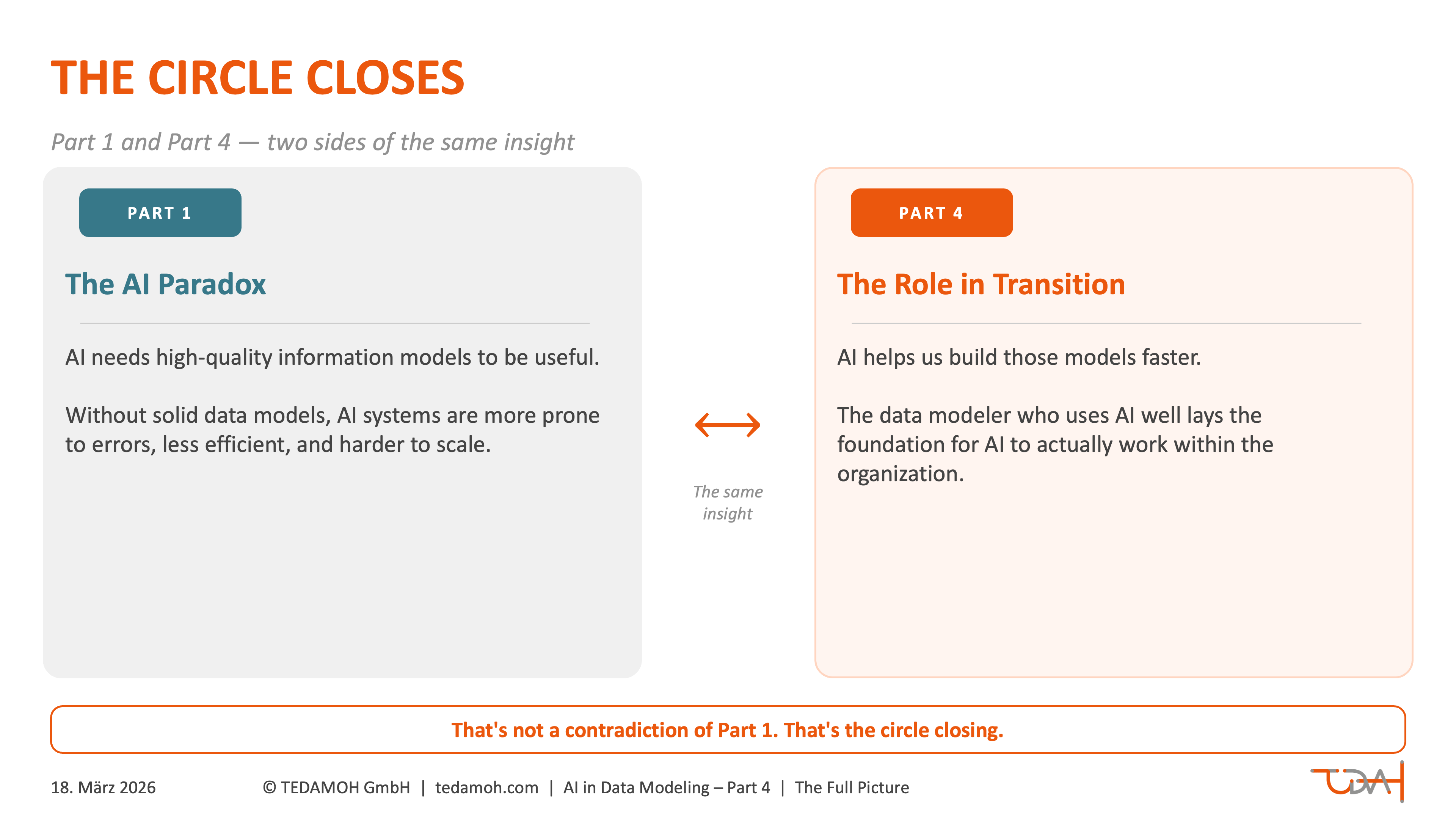

And one last thought, to close the loop: Part 1 of this series showed that AI needs high-quality information models to be useful. What's now clear is that AI helps us build exactly those models faster. The data modeler who uses AI well doesn't just deliver better models — they lay the foundation for AI to actually work within their organization. That's not a contradiction of Part 1. That's the circle closing.

So long,

Dirk